Difference between revisions of "Analyzing Word List Data"

John Carter (talk | contribs) |

Latest revision as of 17:35, 6 August 2012

Introduction

As we said in the introduction to these procedures, the most common reason to use word lists is to distinguish one variety from another and to decide when one variety is unintelligible to speakers of another. To do this, we analyse how similar one word list is to another and produce a lexical similarity percentage. We have to decide on a principle of comparison detailing the criteria we use to decide whether words are similar or not. This section will detail procedures you can carry out to achieve this.

For further reference as you read these procedures, see the Field Guide Glossary.

Data Entry

Type the transcriptions into a spreadsheet program such as Excel, or directly into WordSurv. If you use a spreadsheet program, though, you will probably find it easier to export the data into other tools that can help with analysis, such as Phonology Assistant. For detailed instructions about inputting word lists into WordSurv 6.0.2, see Inputting Data into WordSurv.

Make sure to use Unicode fonts for your IPA characters. If you don't, they may not display correctly on other computers. For instructions on entering IPA Unicode text, see our Typing IPA page.

Type the transcriptions exactly as you wrote them in the field. You can revise them later based on the recordings you made (see the section on checking transcriptions below).

Transferring the Recording onto a Computer

If you used a digital recording device, transfer the data using either a USB cable or, if the data is stored on a memory card, a card reader. For tape recorders or older Minidisc (MD) players, you have to use a patch cord which goes from the headphone (or line-out) jack of the recorder to the microphone (or line-in) jack of the computer.

Use software such as SIL’s Speech Analyzer (SA) to record sound on the computer as you play the recording from the recorder. SA not only lets you record sound but also lets you view the waveform, pitch contours, spectrogram, and formants, which can be extremely useful for analysis.

Transfer the recording into SA track by track if using a digital recorder or, if using a tape recorder, in chunks of a few minutes each. If you transfer it all at once, you will get a very large file that takes a long time to open and split up later, so you will save time by recording in chunks now. Track by track is the best way to make sure you don't lose anything. You can set an MD player to play one track at a time. With a tape recorder, transfer about 5 minutes at a time. If you happen to stop in the middle of a word, rewind a little before transferring the next section to avoid losing any data.

See Speech Analyzer transfer settings for details about what settings to use in SA when transferring a recording. It is very important to check these settings; otherwise, you could end up with a poor-quality transfer.

Checking the Transcriptions

Listen to the recordings in order to check the field transcriptions you earlier entered into the computer. Make sure you have used the same symbol for the same sound throughout and that the transcriptions are as phonetically accurate as possible. You can use software like Speech Analyzer (SA) to do acoustic analysis to get a more accurate idea of the phonetics. The SA Help files are very helpful for understanding acoustic phonetics. An excellent text to help you with this is Peter Ladefoged's 2003 book Phonetic Data Analysis.
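One way to check that the same symbol has been used for the same sound is to tally every character that appears in your transcriptions and look for rare or unexpected entries. The following is a minimal sketch only; the function name and the sample words are hypothetical and are not taken from any survey data or from WordSurv.

```python
import unicodedata
from collections import Counter


def symbol_inventory(transcriptions):
    """Count every character used across a list of transcriptions."""
    counts = Counter()
    for word in transcriptions:
        # NFC normalisation lets precomposed and combining spellings of the
        # same character count as one symbol where Unicode defines both.
        counts.update(unicodedata.normalize("NFC", word))
    return counts


# Hypothetical transcriptions. U+0261 (IPA script g) and U+0067 (keyboard g)
# look alike but are different code points, so they are listed separately.
words = ["\u0261a", "ga", "\u0261u"]
for char, n in sorted(symbol_inventory(words).items()):
    print(f"U+{ord(char):04X} {unicodedata.name(char, '?')}: {n}")
```

A symbol that appears only once or twice in a long list is worth checking against the recording. Note that this sketch counts combining diacritics as separate entries.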

Sometimes you will come across sounds that you will want to compare closely. Using SA, you can combine the recordings for two words into a separate file (this is even easier if you have split the recording up into separate files for each word). Then you can listen to the words one after the other, and compare the waveforms, pitch, formants, or whatever else you want.

Take some time at this stage to save some time later: split the recording up into separate files for each word in the list. These files should contain the word number, the English gloss, the elicitation prompt (all spoken by you), and the informant saying the word three times. When checking the transcriptions, you will often want to refer quickly to another word and listen to the two one after the other. Splitting the recording also means that researchers who later access the data can do so very efficiently.

Once you've transferred the data, make a backup copy of the transcriptions. It's vital to keep this in a separate place from the computer with the original files. If you back up a copy to the same hard drive or physical location as your original data and your computer equipment gets stolen or suffers a fault, you might lose both your original and your backup. Your backup is also useful in case you change something in your files and need to recover the original part or compare it with the original.

Identifying Roots

When eliciting words, you might get the word you want plus some extra information. For example, if you ask for the word for run, you might get run (going) or run (coming). When comparing the words for run across languages, the extra words for going or coming are irrelevant, and so should be dropped. Although you want to isolate run in your recording, don't cut or delete the extra information from your original data. This could be very useful for anyone researching this language in the future. Instead, make a copy of the original and then cut out the extra information in the copy.

Also, some languages have syllables that are added on before or after many different words. These are not part of the root and so should be dropped. Suppose that in two related varieties, many words have a nasal pre-syllable that is determined by the following consonant (e.g. mb, nd, or ŋg). If these were included in the comparison of words, they would artificially increase the lexical similarity, because they are the same in every word that has a nasal pre-syllable. Again, as explained in the previous paragraph, don't cut or delete extra information from the original files but make a copy to edit.

Thus, the first step in comparing the words is to reduce each transcription to its root form. If there is a common syllable added onto many words, drop it. If there are extra words and you know their meaning is not relevant, then drop them. If there are extra words whose meaning you do not know, then keep the pair of words that seem to be most similar.
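The nasal pre-syllable case described above can be sketched in code. This is an illustration under stated assumptions, not a general procedure: the table of nasals and following stops, the function name, and the sample forms are all hypothetical, and the rule would need to be adapted to the varieties you are actually analysing. The original data is left untouched, and the reduced forms go into a copy.

```python
# Hypothetical pre-syllable rule: a nasal is dropped only when it is
# immediately followed by a stop at the same place of articulation.
HOMORGANIC = {"m": "bp", "n": "dt", "ŋ": "gk"}


def strip_nasal_presyllable(form):
    """Return the form without a leading homorganic nasal, if it has one."""
    if len(form) > 2 and form[1] in HOMORGANIC.get(form[0], ""):
        return form[1:]
    return form


# Hypothetical field transcriptions keyed by gloss.
field_data = {"dog": "ŋgu", "water": "nda", "fire": "mi"}

# Build a separate dictionary of roots so the original stays as collected.
roots = {gloss: strip_nasal_presyllable(form) for gloss, form in field_data.items()}
print(roots)
```

The form for "fire" is unchanged because its nasal is followed by a vowel, so it is treated as part of the root.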

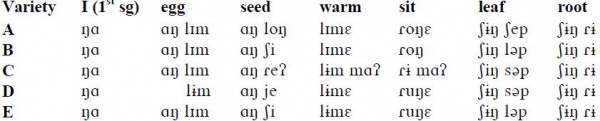

For example, consider the data below from Noel Mann:

Analysis for these data would be something like this:

- For the first word ‘I’ (first person singular), the form is unambiguously monosyllabic; thus no further analysis is required and these forms can be directly compared.

- For the words egg and seed, all of the varieties have generally similar forms. This disyllabic form is made up of a shared first weak syllable and two different major syllables following that. In this case, the weak syllable is lost in one case and including it would lead to an artificially lower lexical similarity percentage. It is further noted that the similarities between the form for both egg and seed may indicate that this is some sort of classification particle and should be eliminated from the lexicostatistic comparison; thus only the major syllable is compared.

- The word forms for warm and sit are similar, with an apparent morphologic vowel particle at the end of the word, and an unusual form in two of the words ending in a glottal stop.

- Considering the word forms for leaf and root, it is apparent that there is an initial morpheme having a meaning relating to 'tree'. This morpheme provides supplemental semantic information that is not necessary to the core meaning of the major syllable. Including it in the comparison would lead to an artificially higher lexical similarity percentage. Thus, this morpheme is ignored.

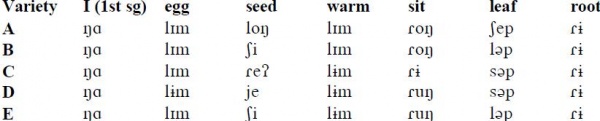

Applying these basic steps, the data can be reduced to the roots by eliminating weak and supplemental syllables resulting in the following data:

Borrowings

If the purpose of your survey is to investigate current intelligibility, the best way to treat borrowings is to always keep borrowings from older languages but always drop borrowings from more recently introduced languages. For more, refer to the information by Nahhas about borrowings.

Comparing Words

The final product we are after is a lexical similarity percentage for each pair of varieties being compared. To get these, we need to compare word pairs and then calculate the proportion of similar pairs in each list. There are many ways to compare words and some are listed in this section.

Inspection Method

This is the easiest method but the least accurate. The researcher simply looks at the pairs and decides which ones are similar and which ones are not. It might be useful for a quick count, to get an idea of what a more thorough analysis might reveal. But it should never be mistaken for a thorough method because it has no scientific criteria for judging relationships between words. It isn't reliable because different linguists who use this method on the same set of words are likely to come to different conclusions.

Comparative Method

The most thorough way to decide which words are similar is through the use of the Comparative Method to establish which word pairs are cognate. In this case, if words are cognate, then they are considered to be lexically similar and the lexical similarity percentage can also be called a cognate percentage. However, as Frank Blair says in his 1990 book Survey on a Shoestring<ref name="blair">Blair, Frank. 1990. Survey on a Shoestring. Dallas: SIL.</ref>, “this process is often more time-consuming than a researcher on survey could desire. It also may require information not readily available to the surveyor.”

If the only use for the word list is to screen for low intelligibility, the Comparative Method may be too time-consuming and, as this method favours large lists of words, there may not be enough data from an intelligibility survey to apply it anyway. Surveyors should keep this end in mind when deciding how many words to collect. Collecting more data than you require will not only meet your needs adequately but can also better serve the greater linguistic community by enabling other types of analysis to be applied.

The Blair Method

A method which takes the middle ground between the Comparative Method and simple inspection has been developed in South Asia. It's based on the method outlined in Frank Blair's 1990 book Survey on a Shoestring<ref name="blair" /> and is thus called the Blair Method.

See the Blair Method page for detailed guidance on how comparisons are made between varieties.

Levenshtein Distance Method

See our Levenshtein Distance page for more info about this method.
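As a rough illustration, the standard Levenshtein (edit) distance between two transcriptions can be computed as below. This is a generic textbook sketch, not necessarily the variant described on the Levenshtein Distance page. Dividing by the length of the longer word is one common normalisation and is an assumption here, as are the function names.

```python
def levenshtein(a, b):
    """Minimum number of insertions, deletions, or substitutions turning a into b."""
    previous = list(range(len(b) + 1))
    for i, seg_a in enumerate(a, start=1):
        current = [i]
        for j, seg_b in enumerate(b, start=1):
            cost = 0 if seg_a == seg_b else 1
            current.append(min(
                previous[j] + 1,         # delete seg_a
                current[j - 1] + 1,      # insert seg_b
                previous[j - 1] + cost,  # substitute, or keep if identical
            ))
        previous = current
    return previous[-1]


def normalized_distance(a, b):
    """Edit distance divided by the length of the longer word (0.0 to 1.0)."""
    longest = max(len(a), len(b))
    return levenshtein(a, b) / longest if longest else 0.0


print(levenshtein("naŋ", "nan"))  # 1
```

Each character is treated as one segment, so a phone written with a diacritic or as a digraph counts as more than one segment unless the transcriptions are first split into phones.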

Computing the Percentage

Whatever method is used to determine lexical similarity for word pairs, the method of computing the percentage is simple. Just divide the number of lexically similar items by the number of items you are comparing. In the example from the end of the Blair method page, this would be 2 ÷ 3 = 0.667 = 67%. Of course, you would never do this with only three words, but you get the idea. Suppose instead that you had compared 98 pairs of words and found that 74 of them were lexically similar. Then your lexical similarity percentage would be 74 ÷ 98 = 0.755 = 76%.
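The arithmetic above can be written as a small function. This is a sketch only; the function name is hypothetical, and rounding to a whole percentage follows the worked figures in the text.

```python
def lexical_similarity_percentage(similar, compared):
    """Percentage of compared items that were judged lexically similar."""
    if compared <= 0:
        raise ValueError("at least one item must be compared")
    if not 0 <= similar <= compared:
        raise ValueError("similar must be between 0 and compared")
    return 100 * similar / compared


print(round(lexical_similarity_percentage(2, 3)))    # 67
print(round(lexical_similarity_percentage(74, 98)))  # 76
```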

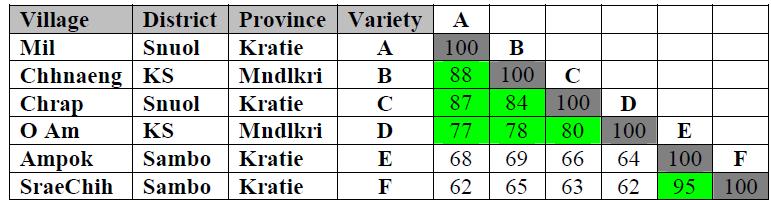

The Blair Method is applied to language varieties pairwise. That is, if you have 10 varieties you are comparing, you have to apply the method to each of the 45 unique pairs. Sometimes it is faster to do some of the steps for many varieties at once. Suppose again that you are comparing 10 varieties. For each item in the list, place each of the 10 words in a “correspondence set” (or “similarity set”). When items are clearly lexically similar (e.g. there are 5 phones and 4 of them are identical) or clearly not similar, there is no point in taking very much time writing which categories and sub-categories each segment are in. Just mark all the words that are clearly lexically similar with the letter “a”, indicating that they are in the same set. If there is more than one distinct set of similar words, use more letters to distinguish each set. Later, you can go back and figure out the categories for the remaining, less clear, words, and put them in the right sets. Doing this in WordSurv or Excel can speed the process of computing the lexical similarity percentages for all the possible pairs of varieties. WordSurv does it automatically, and Excel can be programmed to do so. Once you have computed the percentages for each pair of varieties, you can organize them in a matrix, such as Table 4.

Notice that the comparison of a variety with itself is always 100%. Also, the upper right of the matrix is blank. That is because these cells, if filled in, would be redundant. It is helpful to arrange the matrix such that the languages that group together lexically are next to each other. WordSurv has an algorithm which will do this automatically.

It is important, in constructing such a matrix, that all the percentages be based on roughly the same set of words. For more on why this is, see the page The Variability of a Word List Percentage. WordSurv calculates such a thing, but there are good reasons to think it dubious.
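If similarity sets have been marked with letters as described above, the percentage for every pair of varieties can be computed in one pass. The sketch below assumes a hypothetical data layout (one list of set letters per variety, with `None` where an item is missing) and hypothetical variety names. It is not how WordSurv stores or computes its results. It also reports how many items each percentage is based on, so you can see whether the pairs rest on roughly the same set of words.

```python
from itertools import combinations


def similarity_matrix(set_labels):
    """Map each pair of varieties to (rounded percentage, items compared).

    set_labels: {variety: [set letter per item, or None if no word was collected]}
    """
    results = {}
    for a, b in combinations(set_labels, 2):
        # Only compare items for which both varieties have a word.
        pairs = [(x, y) for x, y in zip(set_labels[a], set_labels[b])
                 if x is not None and y is not None]
        similar = sum(1 for x, y in pairs if x == y)
        percent = round(100 * similar / len(pairs)) if pairs else None
        results[(a, b)] = (percent, len(pairs))
    return results


# Hypothetical set letters for four items in three varieties.
labels = {
    "Variety A": ["a", "a", "a", "a"],
    "Variety B": ["a", "b", "a", None],
    "Variety C": ["b", "b", "a", "a"],
}
for pair, (percent, n) in similarity_matrix(labels).items():
    print(pair, f"{percent}% of {n} items")
```

Only the lower triangle is computed, matching the layout of the matrix described above, and a variety compared with itself is omitted because it is always 100%.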

Syllostatistics

Noel Mann has modified the Blair Method using what he calls syllostatistics. In Southeast Asia, many languages are monosyllabic and comparing the corresponding onsets and rhymes of word pairs makes more sense than comparing individual phones. Thus, for a word like [naŋ], the comparison would consist of two segments, [na-] and [-ŋ], rather than three as in Blair’s Method. See the Syllostatistics page for more.

References

<references />